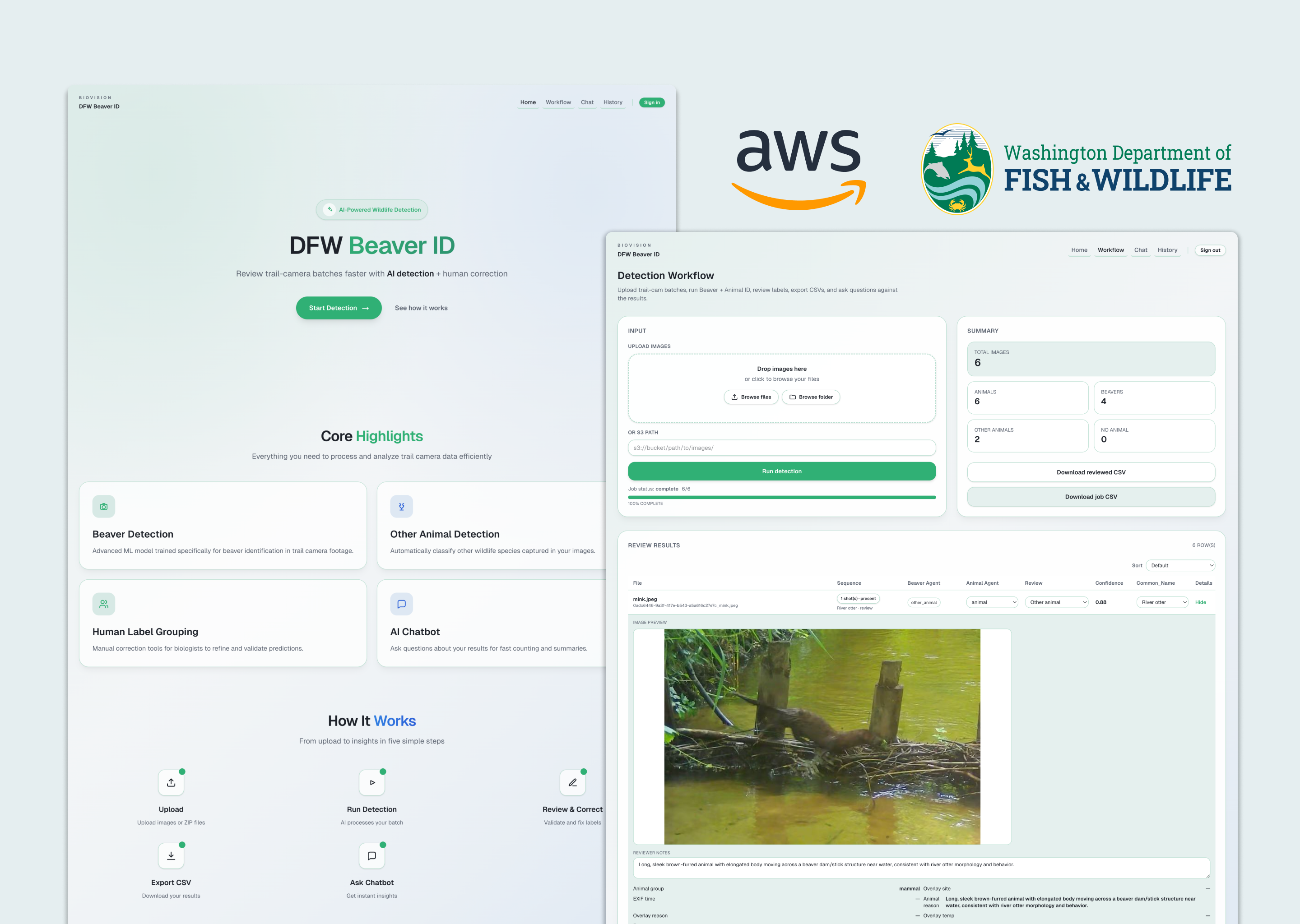

BioVision

TL;DR

Problem

Wildlife researchers spend enormous amounts of time manually reviewing thousands of trail camera images to identify beavers, a slow, error-prone process that delays research insights by weeks.

Solution

Designed BioVision, an AI-powered system that detects wildlife in large-scale camera trap datasets and streamlines monitoring through an AI + human verification workflow.

Impact

0→1

Designed a full AI-assisted wildlife research workflow platform from scratch.

↓ 90%

Reduced manual image review time through automated beaver detection.

↓ 50%

Manual correction rate halved following the introduction of the dual-agent pipeline.

Outcome

01

Beaver and Species Identification

02

AI Chatbot

03

History

04

Manual Review & CSV Export

A critical species, invisible to existing tools

Beavers play a critical role in restoring stream ecosystems. By building dams, they slow water flow, increase water retention, and create habitats that support salmon migration and spawning. As a result, monitoring beaver activity has become an important indicator for ecological restoration efforts.

Wildlife researchers deploy camera traps along streams to observe animal activity, but these cameras generate thousands of images that must be manually reviewed. Identifying beavers within these large datasets is time-consuming and difficult to scale, creating a major bottleneck for ecological monitoring.

Every model we tried failed — so we built our own approach

01

Understanding the biologist's workflow

We conducted in-depth interviews with the wildlife biologists who serve as our primary stakeholders. Their core workflow involves deploying camera traps in the field. Every few months, they retrieve the SD cards and manually review all captured images on their own computers.

Key Pain Points

- Large volumes of images must be reviewed manually

- Many images contain empty scenes triggered by birds or motion

- Researchers must manually distinguish beavers from similar species such as nutria, mink, and otters

02

Market & Tool Audit

We evaluated open-source wildlife detection models available on the market. One widely cited model, SpeciesNet, claimed general animal detection capability, but when tested against our dataset, it failed to register beavers as animals at all. Because beavers are often only partially visible in frame and appear in low-contrast grayscale images, the model's detection pipeline broke down at the very first step.

This was a critical finding: if a model cannot identify a beaver as an animal, it has no chance of classifying it at the species level. No existing off-the-shelf tool could reliably handle our use case.

Key Insights

- Many models failed to detect beavers when only partial body parts were visible

- Most images were nighttime grayscale photos, significantly reducing detection performance

- Existing animal-detection models often failed to recognize beavers as animals at all

03

Technical Iteration

Armed with a labeled dataset provided by stakeholders (approximately 600–700 images), the team ran three successive technical experiments before arriving at the final approach.

Dataset too small; poor generalization

~80% accuracy but recall remained poor

Flexible, no retraining needed
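The "~80% accuracy but recall remained poor" outcome is a common pattern when most images contain no beaver. The sketch below illustrates it with made-up counts; they are hypothetical and are not the project's actual results.

```python
def accuracy_and_recall(tp: int, fp: int, tn: int, fn: int) -> tuple[float, float]:
    """Compute accuracy and recall from confusion-matrix counts."""
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total
    # Recall: share of real beaver images the model actually found.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return accuracy, recall


# Hypothetical test set: 100 images, only 20 of which contain a beaver.
# The model finds 4 of the 20 beavers and raises 4 false alarms.
acc, rec = accuracy_and_recall(tp=4, fp=4, tn=76, fn=16)
print(f"accuracy={acc:.2f} recall={rec:.2f}")  # accuracy=0.80 recall=0.20
```

In this illustration the model is right on 80 of 100 images mostly because empty frames dominate, while it still misses 16 of the 20 beavers. For a monitoring task where a missed beaver is the costly error, recall is the more telling number.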

04

Scope Evolution — Two late requirements reinforced the pivot

During the research process, stakeholders introduced two additional requirements that further shaped the technical direction:

Sequence-based detection

Camera traps fire in bursts of three consecutive frames, and a beaver may appear in only one of the three shots. Stakeholders needed detection to operate at the sequence level, not per image, to avoid false negatives across a capture event.
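One simple way to express sequence-level detection is to group per-frame results by burst and mark the burst positive if any frame is positive. This is a minimal sketch under assumptions: the `FrameResult` type, the `sequence_id` field, and the any-frame rule are illustrative, not the aggregation BioVision actually uses.

```python
from dataclasses import dataclass
from itertools import groupby


@dataclass(frozen=True)
class FrameResult:
    sequence_id: str       # hypothetical ID shared by the frames of one burst
    filename: str
    beaver_detected: bool  # per-frame verdict from the detector


def detect_by_sequence(frames: list[FrameResult]) -> dict[str, bool]:
    """Mark a sequence positive if a beaver was detected in any of its frames."""
    # groupby only merges adjacent items, so sort by sequence first.
    ordered = sorted(frames, key=lambda f: f.sequence_id)
    return {
        seq_id: any(f.beaver_detected for f in group)
        for seq_id, group in groupby(ordered, key=lambda f: f.sequence_id)
    }


frames = [
    FrameResult("seq-001", "IMG_0001.JPG", False),
    FrameResult("seq-001", "IMG_0002.JPG", True),   # beaver visible in one frame only
    FrameResult("seq-001", "IMG_0003.JPG", False),
    FrameResult("seq-002", "IMG_0004.JPG", False),
    FrameResult("seq-002", "IMG_0005.JPG", False),
    FrameResult("seq-002", "IMG_0006.JPG", False),
]
print(detect_by_sequence(frames))  # {'seq-001': True, 'seq-002': False}
```

With per-image reporting, two of the three frames in `seq-001` would read as negatives. Aggregating by burst records the capture event once, as a detection.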

General animal pre-filtering

The desired workflow was two-stage: first filter for any animal presence, then identify beavers specifically. But as established in the tool audit, no reliable general-purpose animal detector existed that could handle our image conditions. This reinforced the case for an AI agent capable of reasoning across both steps simultaneously.

Design Opportunity

Instead of relying solely on traditional machine learning models, we explored an AI-assisted detection agent that could analyze camera trap images using contextual reasoning and sequence-based understanding. This approach would allow the system to:

- Identify animals even when partially visible

- Reason across image sequences

- Better handle low-light grayscale images

Design Process

01

Upload & Detection

Pain point: manually reviewing large volumes of images one by one is extremely inefficient.

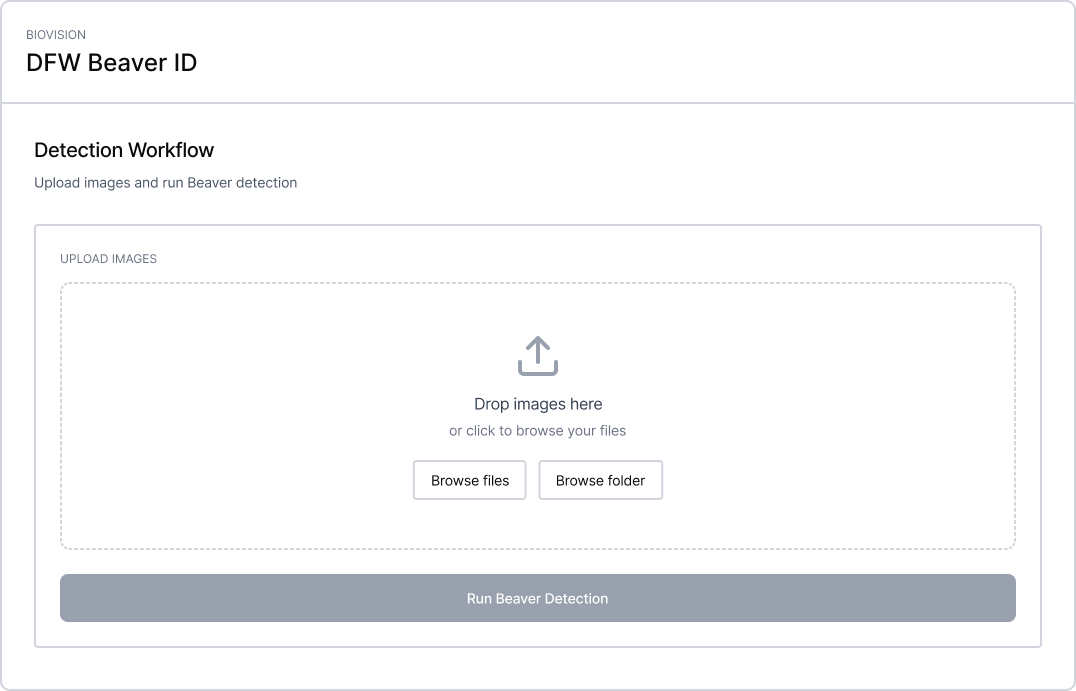

Version 1

A minimal first pass: upload and detect.

The first version let biologists upload images locally and run a beaver detection job. It validated the core concept, but local file upload quickly proved impractical — biologists retrieve hundreds of images at a time.

Problem

Local upload didn't scale to real batch sizes.

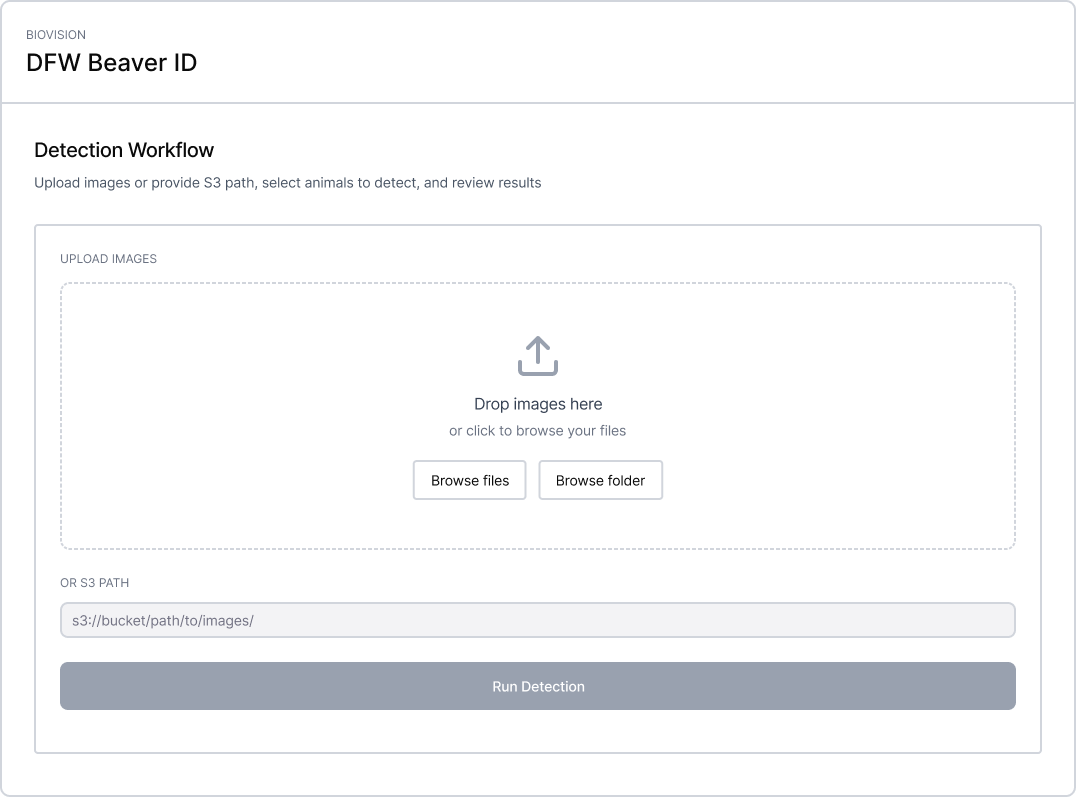

Version 2

We added an S3 path input so biologists could point directly at their existing cloud storage.

Problem

Large dataset uploads showed no progress indicator, so users assumed the system had crashed.

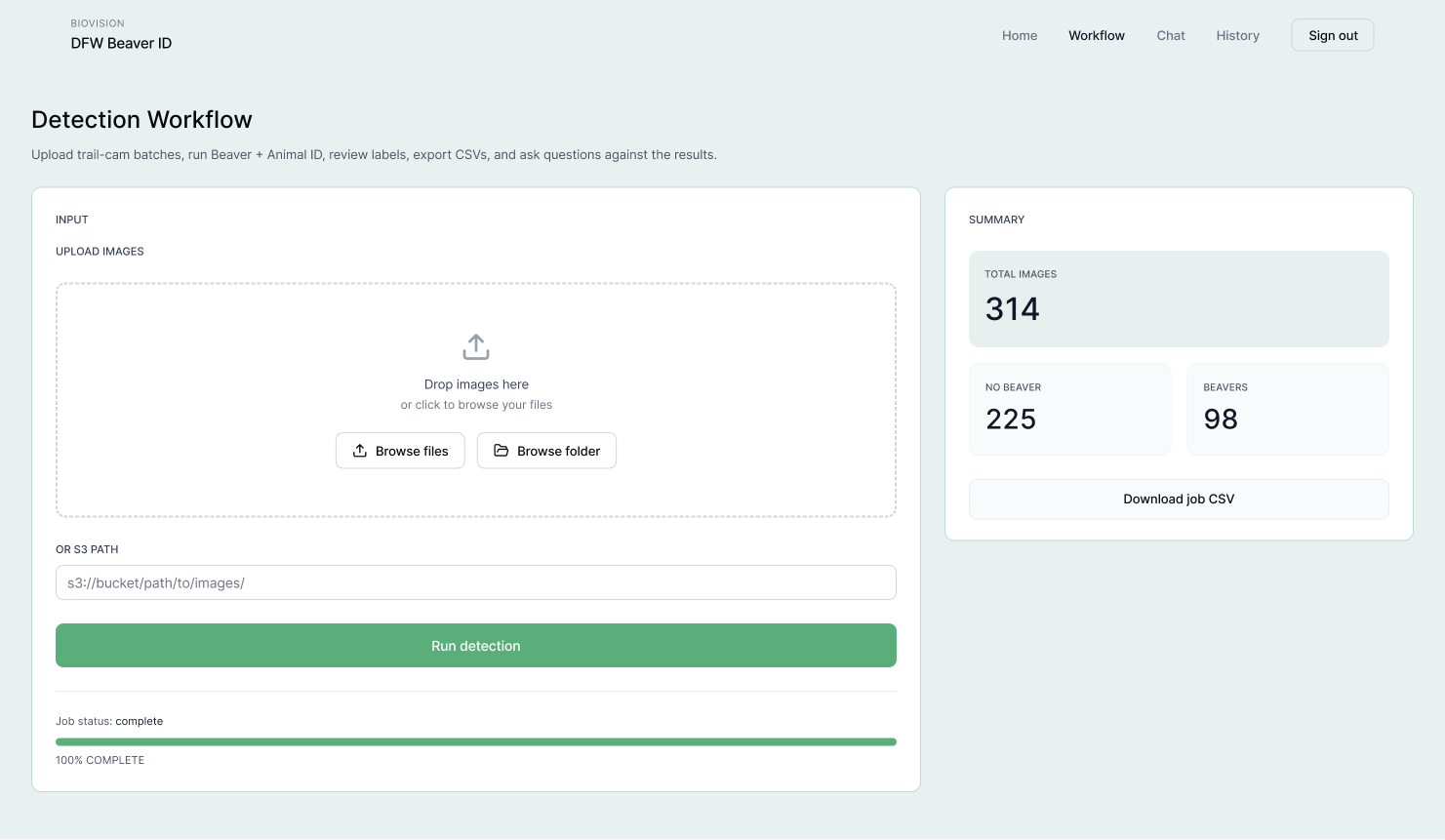

Final Design

Added a progress bar and summary panel so biologists can track job status and see detection results at a glance.

Impact

Reduced manual image review time by 90%, enabling biologists to process hundreds of trail camera images in minutes rather than hours.

02

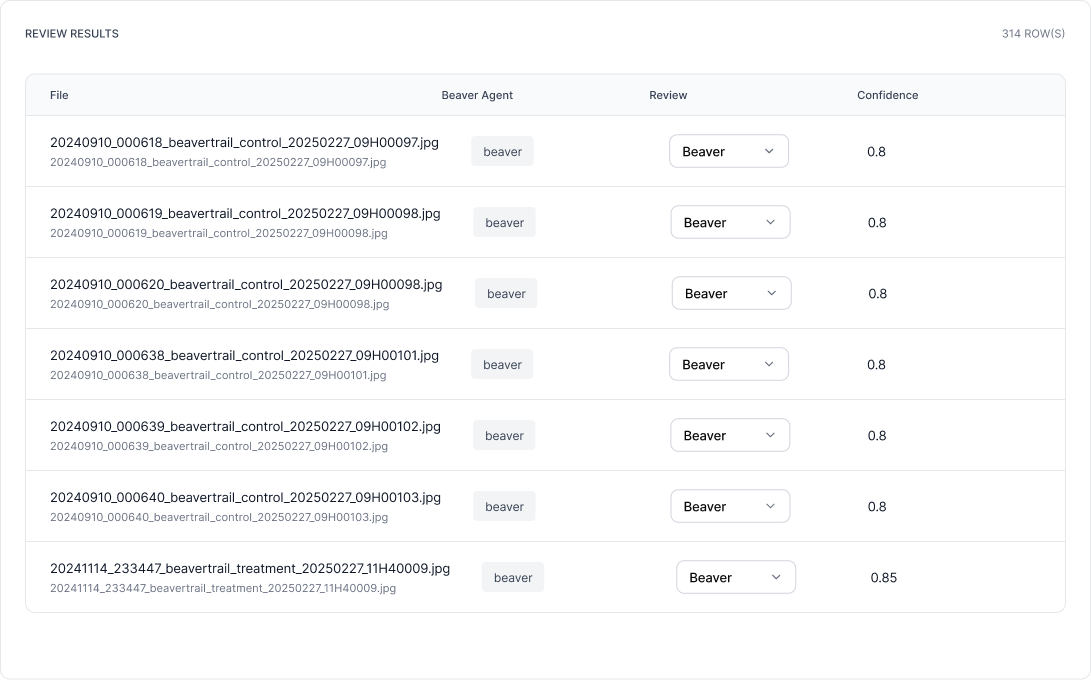

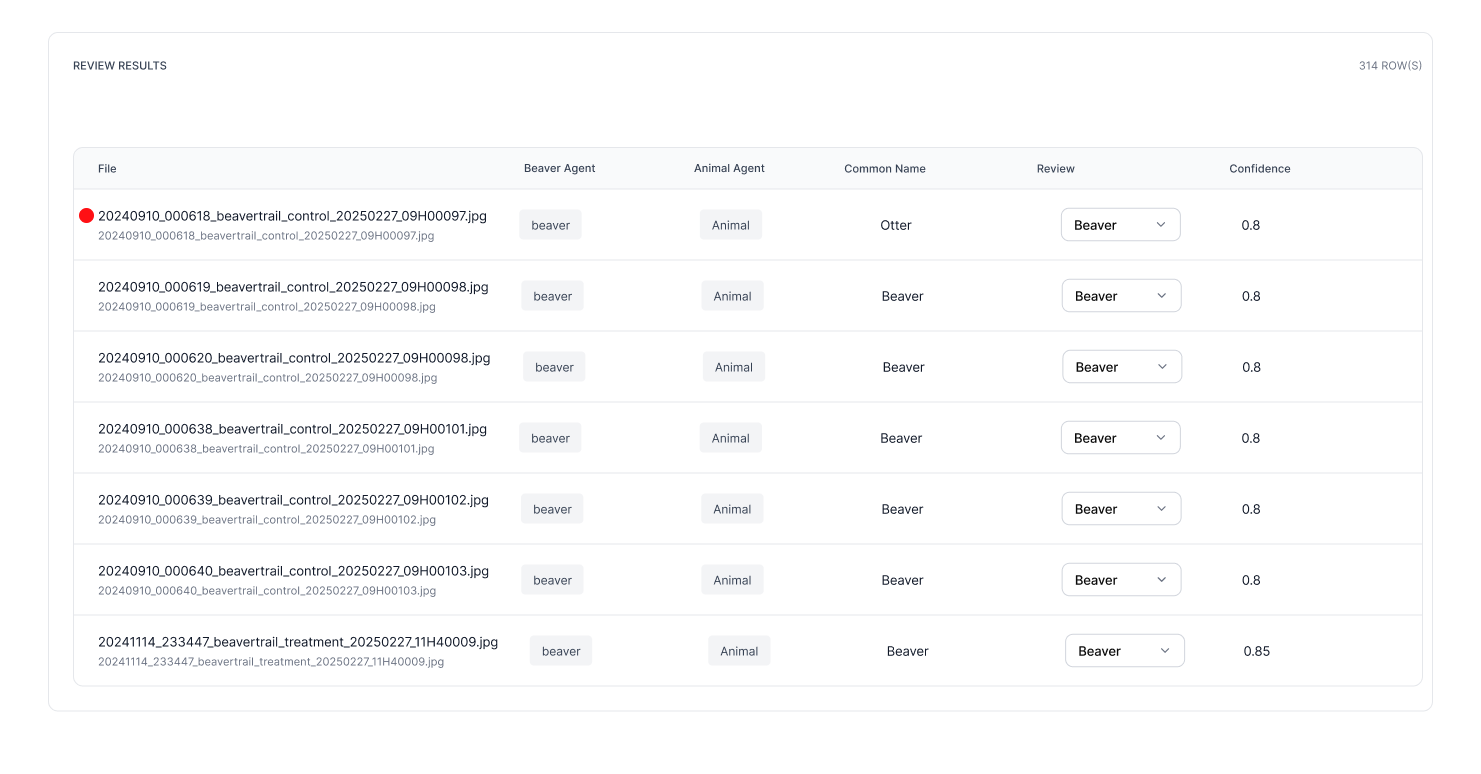

Review Results

Pain point: AI detection results are not trustworthy and require manual verification, but there are no tools to support that process.

Version 1

The first version showed only the beaver agent's results alongside a basic review table. While it confirmed whether a beaver was present, it offered no way to cross-validate uncertain detections.

Problem

A single agent produced unreliable results on ambiguous images.

Version 2

A second animal detection agent was added. When the two agents returned conflicting outputs, the row was flagged for human review. However, biologists still had to manually locate each flagged image in AWS S3 to verify it.
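The conflict check can be sketched as a small rule over the two agents' outputs. The `Row` fields and the rule itself are assumptions for illustration: a row counts as conflicting when the beaver agent reports a beaver but the animal agent reports no animal. The real pipeline may define conflicts differently.

```python
from dataclasses import dataclass


@dataclass
class Row:
    image: str
    beaver_agent: bool  # beaver agent's answer: is a beaver present?
    animal_agent: bool  # animal agent's answer: is any animal present?


def flag_for_review(row: Row) -> bool:
    """Assumed rule: a beaver cannot be present if no animal is present."""
    return row.beaver_agent and not row.animal_agent


rows = [
    Row("IMG_0001.JPG", beaver_agent=True, animal_agent=True),    # agents agree
    Row("IMG_0002.JPG", beaver_agent=True, animal_agent=False),   # contradiction
    Row("IMG_0003.JPG", beaver_agent=False, animal_agent=True),   # another animal
    Row("IMG_0004.JPG", beaver_agent=False, animal_agent=False),  # empty scene
]
flagged = [r.image for r in rows if flag_for_review(r)]
print(flagged)  # ['IMG_0002.JPG']
```

Only contradictory rows reach a human reviewer, which keeps the manual step focused on the images where the agents are least reliable.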

Problem

No inline image preview — reviewing flagged results required leaving the tool entirely.

Final Design

The final version added the animal detection agent's results to the summary, giving biologists a clearer breakdown of what was found. An inline image preview was also introduced so biologists can review flagged results directly on the page.

Impact

Detection accuracy improved from 92% to 97% following the introduction of the dual-agent pipeline. Manual correction rate dropped from 40% to 20%.

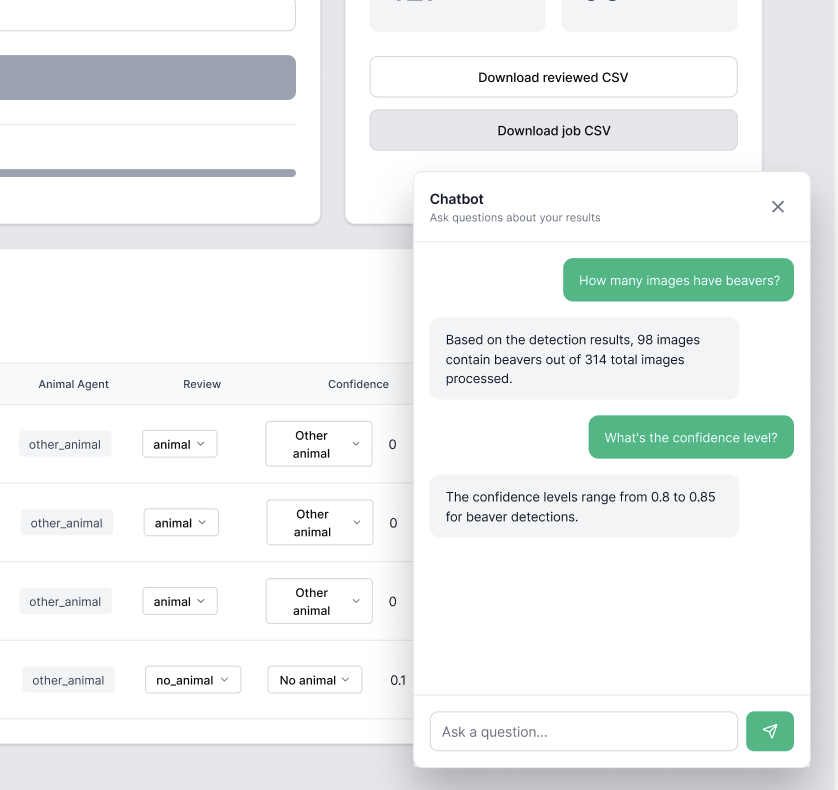

03

AI Chatbot

Pain point: after detection, there are no data analysis tools to make sense of the results.

Version 1

Chatbot embedded in workflow

The chatbot was embedded directly alongside the detection results on the Workflow page, so users could ask questions immediately after a detection run.

Problem

- Mixing two features in one view made the interface too complex

- The chatbot should support uploading historical CSVs, not just the current session

Final Design

The chatbot was moved to a dedicated page so biologists can upload any CSV, including historical data, and query it through natural language without needing Excel or leaving the tool.

Impact

Expanded the tool beyond a single research team. Biologists working with any wildlife species can upload their own CSV and query their data through natural language, making the platform applicable across broader conservation workflows.

The tool outlived the use case — and that's the point

Working on BioVision offered a rare opportunity to apply large vision-language models to a domain where AI adoption is still in its early stages. Wildlife camera trap analysis has traditionally relied on manual review or conventional machine learning, both of which struggle with the realities of field data: low-light grayscale images, partially visible subjects, and visually similar species. Shifting to an AI agent approach proved far more resilient to these conditions, and the biologists from the Washington Department of Fish and Wildlife responded with genuine excitement.

The comparison between traditional ML and the agent-based approach was one of the most instructive parts of the project. Fine-tuned models like YOLOv8 delivered reasonable accuracy on clean data but broke down on edge cases that field conditions routinely produce. The agent approach, by contrast, could reason across ambiguous images without retraining — a critical advantage in a domain where labeled datasets are small and expensive to produce.

Looking ahead, the most meaningful next step would be opening the detection pipeline to user-defined prompts. Currently the tool is built around beaver identification, but the underlying architecture is not species-specific. If biologists could specify their own target species, BioVision could become a general-purpose wildlife monitoring platform applicable across research teams, conservation programs, and ecosystems far beyond the Pacific Northwest.